In the past, we treated testing or quality assurance in IT delivery as something akin to insurance. In traditional waterfall delivery, we tested as much scope as possible before releasing into production so that the testing team could “assure” quality. If something went wrong, the assurance had failed. From a delivery team perspective, we laid the fault at the feet of the testing team; after all, that is what we paid them for. If another vendor was involved for testing, we could even blame the cost of poor quality on that vendor, as “clearly” they should have found the problem during testing. So the cost of testing could be seen as the insurance premium paid by the delivery team: when something went wrong, we could call on that insurance and put the cost at the feet of the testers.

The world has materially changed and IT delivery works with different paradigms now. Over the last few months I have had some fascinating conversations that showed me how testing and quality engineering now follow a different paradigm: economic risk management. Let me explain what I have learned.

Let’s start with the scenario. Assume we can identify the whole scope of an application: all the features, all the logical paths through the application, and all the customer/product/process permutations. That gives us 100% of the theoretically testable scope. If you accept 100% risk, you could go live without any testing. Well, you wouldn’t, but theoretically that would just be maximum risk acceptance. So how do you define the right level of testing then?

Here is the thought process:

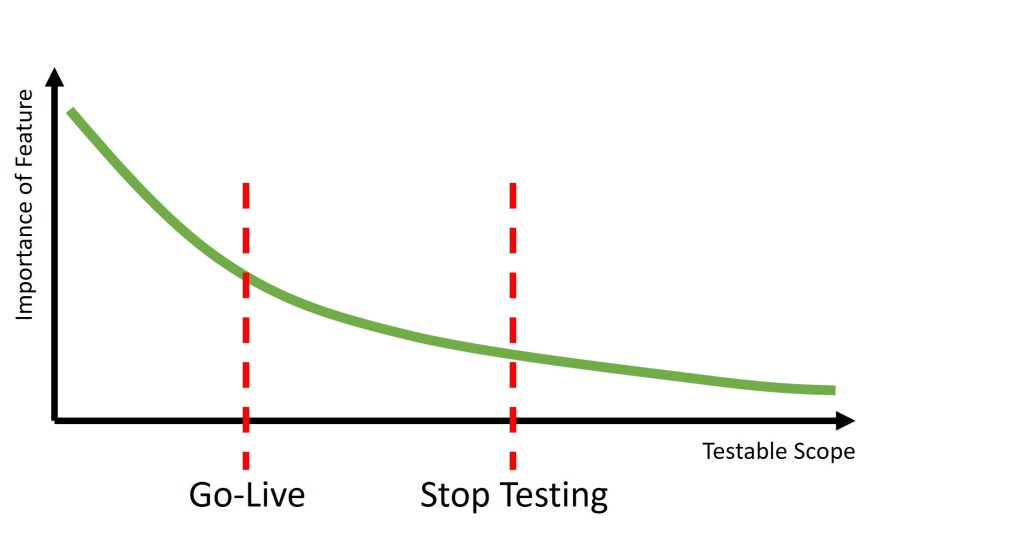

Let’s assume we can order all the testable scope from most important to least important (by business criticality, customer value, or whatever other criteria are right for your company). You can then define two critical points on that continuum:

- The point at which you have done enough testing that the risk of go-live is acceptable

- The point at which the cost of further testing is higher than the value associated with the remaining tests, i.e. the point at which we should stop testing

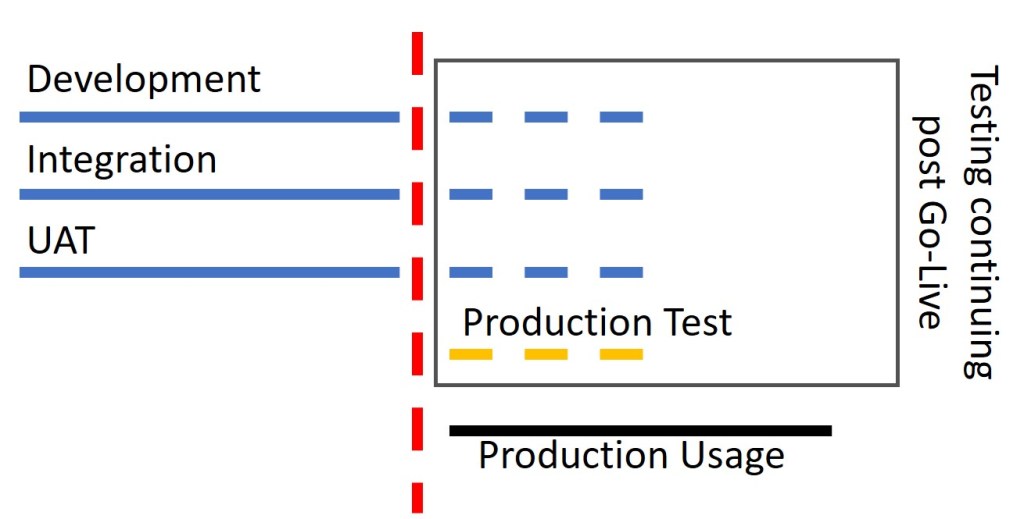
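To make the two points concrete, here is a minimal sketch of how they could be calculated. Everything in it is my own assumption for illustration: the `ScopeItem` fields, the feature names, the numbers, and the simplification that each piece of scope has a single "value at risk" and a single testing cost. It also treats the stop point as the first item whose testing cost exceeds its own value, which is only one possible reading of the second bullet. It is not a definitive model, just a way to see the thought process in code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScopeItem:
    """One piece of testable scope (hypothetical structure)."""
    name: str
    risk_value: float  # assumed expected loss if this scope ships untested
    test_cost: float   # assumed cost of testing this scope


def plan_testing(ranked, acceptable_risk):
    """Return (go_live_index, stop_index) for scope ranked most to least important.

    go_live_index: how many items must be tested before the untested
                   remainder carries an acceptable level of risk.
    stop_index:    how many items are worth testing at all, i.e. the position
                   of the first item whose test cost exceeds its risk value.
    """
    # Risk carried if nothing at all is tested.
    residual = sum(item.risk_value for item in ranked)

    go_live_index = len(ranked)  # default: everything must be tested first
    for n, item in enumerate(ranked):
        if residual <= acceptable_risk:
            go_live_index = n
            break
        residual -= item.risk_value  # testing this item removes its risk

    stop_index = len(ranked)  # default: every item is worth testing
    for n, item in enumerate(ranked):
        if item.test_cost > item.risk_value:
            stop_index = n
            break

    return go_live_index, stop_index


# Illustrative numbers only, already ordered from most to least important.
SCOPE = [
    ScopeItem("checkout", risk_value=50.0, test_cost=5.0),
    ScopeItem("login", risk_value=30.0, test_cost=4.0),
    ScopeItem("search", risk_value=10.0, test_cost=3.0),
    ScopeItem("export", risk_value=4.0, test_cost=3.0),
    ScopeItem("legacy report", risk_value=1.0, test_cost=2.0),
]

go_live, stop = plan_testing(SCOPE, acceptable_risk=6.0)
print("Test before go-live:", [i.name for i in SCOPE[:go_live]])
print("Test after go-live: ", [i.name for i in SCOPE[go_live:stop]])
print("Not worth testing:  ", [i.name for i in SCOPE[stop:]])
```

With these made-up numbers, the first three items are tested before go-live, "export" is tested after go-live, and "legacy report" is never tested because testing it would cost more than the risk it carries.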

You might notice that the two points are not the same, which means testing does not have to stop at Go-Live. The idea of continuing testing after the release is not new. Some companies have always used production for some testing that wasn’t possible in non-production environments.

What we are saying here is that you might decide to go live because of the business benefits, and then continue testing in parallel with stakeholders using the application in production to flush out lower-risk problems. The idea is that if a user beats us to finding a problem, the impact is acceptable. Less frequently used or lower-value features might qualify for post-go-live testing. You wouldn’t want to hold back high-value features from going live if an untested feature is unlikely to be used in production before we get to test it.

There are two thoughts here that would have been heresy when I started testing nearly 20 years ago:

- You can plan to go live before you complete testing

- Testing continues after go-live to find defects faster than the user does

In Agile training, we speak about the need to prioritise defect work alongside features, which can mean choosing to let a defect exist longer when there are higher-value features to deliver. The way I describe testing in this blog follows the same thought process. We want to maximise value and economically evaluate our options.

I am excited by this economic testing framework and encouraged by some of the conversations I have been able to have in support of this idea. People have inherently used similar ideas when they ran behind on testing or when fix-forward decisions were made. Formalising this thought process and consciously planning with it will create additional value for organisations.

The implications are clear to me: testing has moved from being a phase to being an ongoing endeavour. Given limited capacity, prioritisation calls need to be made continuously on where to invest it: in the pre-release phase or in the post-release phase, on further testing of existing features or on testing newly created ones, on more testing of high-value features or on a first round of testing for lower-value features. An economic model based on risk should drive this, not the need to achieve a certain test coverage across the board. Which testing strategy we follow should be a conscious business decision, not an IT delivery decision.

I am sure you will hear more about this modern take on testing and risk management in the future.