Almost every time I talk to executives about AI, the conversation eventually lands in the same place: workforce impact.

Not immediately, of course. We start with the usual things. Productivity. Cycle time. How many people a team might “need” if the tools keep improving. Somebody will mention an impressive demo, followed by “Why can’t we just?”. Somebody else will mention risk. And then, usually with a slightly awkward pause, someone asks the real question: what happens to all the engineers?

Will AI take all the jobs? Will we all sit at home while an agent writes the code, tests it, deploys it and perhaps also sends us a mildly passive-aggressive status update?

I do not think that is the most useful way to frame the problem.

For one thing, I have yet to walk into an organisation and hear somebody say: “We have no problems left to solve, our systems are easy to manage, and quality is consistently excellent.” Quite the opposite. Most large enterprises are still carrying a very long backlog of technology problems, quality issues, delivery friction and operational complexity. Even if every productivity claim about AI turns out to be broadly true, there is still plenty of work to do.

And in the brownfield reality where most large organisations actually live, the results are rarely as magical as they look in demos where AI builds Snake for the four millionth time. Can you imagine how much energy and PE capital has been spent on recreating Snake?

So I do not think the near-term problem is that we suddenly run out of work for software engineers, especially the good ones. I think the more interesting problem is that we may be quietly dismantling the way software people become good in the first place.

The ladder was already sinking

A few years ago I started talking about what I called a sinking skill ladder.

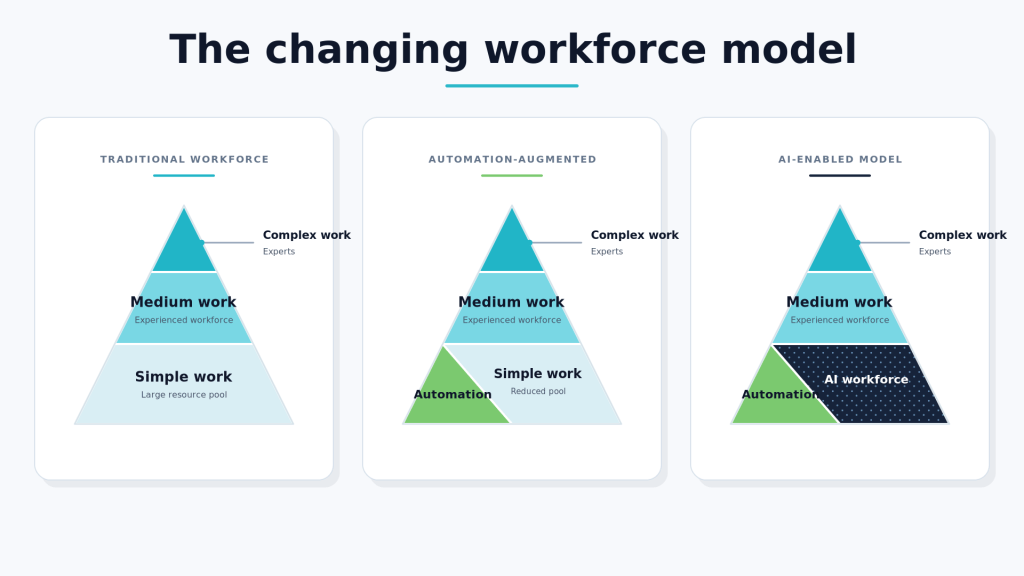

In many organisations, the traditional progression model had already become harder to sustain. A lot of entry-level or more routine work had moved offshore. Teams became thinner in some locations. The work left behind often skewed toward coordination, oversight, integration and stakeholder management. That created a familiar problem: if fewer people get to do the foundational work, where exactly do future seniors come from?

It was already a bit wobbly.

AI is now making that worse, because the bottom part of the ladder is exactly where automation is most tempting. The simpler, narrower, more repeatable tasks are the ones organisations can most easily give to AI tools and agents. From a local optimisation perspective, that makes complete sense.

But ask yourself what those tasks used to do.

They did not just produce output. They also created learning opportunities.

A junior engineer fixing a small defect, writing a test, tracing through a logging problem, or wrestling with a deployment pipeline is not just “doing low-value work”. They are building up valuable experience. They are learning how software behaves when it meets reality. They are beginning to form the mental models that later let them design systems, challenge bad assumptions, or spot when a confidently wrong AI-generated answer is dangerously plausible.

That is why the current shape of the market worries people. There is already quite a lot written about pressure on entry-level roles, and it is not hard to see why. If organisations reduce junior hiring while expecting more experienced people to supervise AI-assisted delivery, that may look efficient in the short term. But it is not a sustainable talent model. You cannot only hire senior developers; someone needs to create them. IBM is bucking the trend by increasing junior hiring again, but that is not where most of the market seems to be heading. We should worry about what this means for our organisations going forward.

The question is not “will developers disappear?”, but “how do we develop developers when the old apprenticeship model gets hollowed out?”

What could the new AI-native entry-level experience look like?

I do not think the answer is to force juniors through a nostalgic version of software engineering that pretends AI does not exist. That would be silly. After all, I did not start my career with punch cards, although COBOL came pretty close.

But I also do not think the answer is to let people become prompt managers with no grounding in how systems are built, tested and run.

In my experience, junior engineers in their first couple of years still need a rounded set of experiences. The mix may change. The pacing may change. The amount of AI support may change. But the underlying developmental needs do not go away. Simon Wardley wrote about the “Human AI System Integrator”, which is worth a read.

If I were designing an AI-native apprenticeship model, I would want people to get exposure to five things early: writing code, testing software, architecture, DevOps, and maintaining an application in production.

- They should still write some code

Not because they must outperform AI on raw code generation. They will not, and that is fine. They need to write code because that is how they build a mental model of how software actually works. What state is. What control flow is. Why edge cases matter. Why a tiny change in one place can produce very odd behaviour somewhere else – I remember my first experience with pointers in C ‘fondly’.

Yes, they can do that in hybrid teams with AI support. In fact, they probably should. The point is not purity. The point is learning. We still teach kids mathematics before handing them calculators. Not because calculators are bad, but because understanding matters.

I think the same applies here.

James Bridle’s fantastic book, New Dark Age, argues that we are heading towards a world that we no longer understand and might lose control over. Let’s avoid that world and train our junior developers to still create code. It may also come in handy if we ever need to take control back.

- They need to learn how quality is evaluated

I do not mean an old-fashioned version of manual testing with pre-defined scripts. I mean they need to learn how to evaluate whether software is actually good enough. They need to interact with the application and put themselves in the shoes of an end user. Explore edge cases. Triage defects. Understand what can go wrong at system level, not just at unit-test level. See performance issues. See data problems. See the difference between “it works on my machine” and “this is fit for purpose”.

A good tester, as the old line goes, looks both ways before crossing a one-way street.

That mindset will be even more important in the AI-assisted software delivery world.

- They need some architectural thinking

The context window for our AI agents will not contain the whole architecture of your organisation, or even of a single application. Nor should it, as that would make things too complex and expensive.

This means somebody still needs to think about decoupling, interfaces, data ownership, API contracts, integration boundaries, duplication of data, failure modes and long-term maintainability. Somebody still needs to ask whether the thing being optimised locally is possibly making the wider system worse.

That does not mean every junior needs to become a formal architect. It does mean they need some exposure to architectural decision-making, because otherwise they may become very efficient at generating change without understanding the shape of the application they are changing and how it fits into the overall architecture.

That is not modern engineering. That is just fast damage.

- They need DevOps experience

Perhaps not surprisingly, I think this area is the one people are not paying enough attention to in the current AI craze.

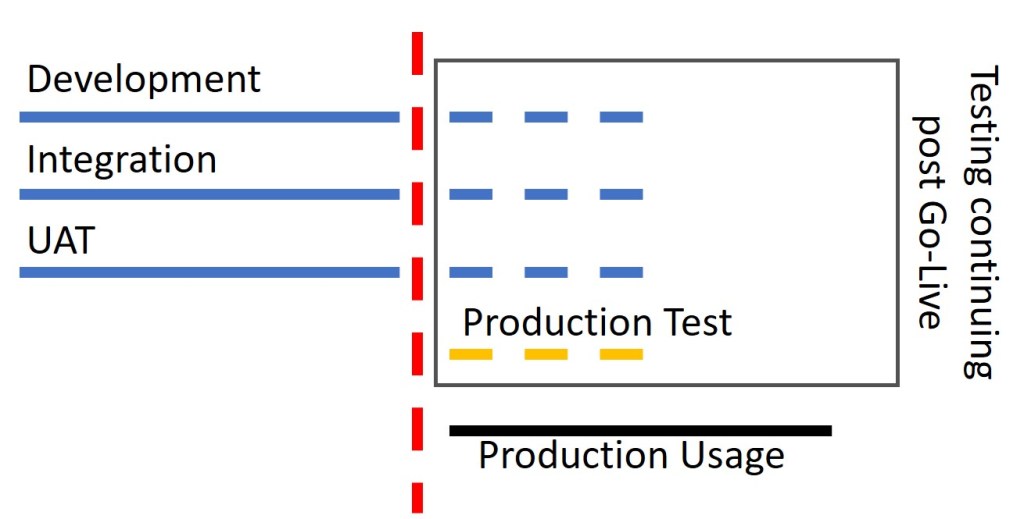

CI/CD, environment management, deployment automation, release predictability, test data, observability, rollback, all of that boring and beautiful machinery: this is where a lot of software delivery either becomes reliable or becomes a road to disaster.

Many projects fail not because the team could not write code, but because the path from code to production is messy, slow or fragile. AI will help here as well, I am sure. But that does not remove the need to understand the principles.

Good engineers need to know how to make change safe, repeatable and visible.

- They need to support software in production

This one matters enormously.

A system is not successful because it passed a demo or completed a sprint. It is successful when it works in production for real users under real conditions.

That means junior engineers should spend time maintaining applications, reading logs, looking at traces, understanding incidents, following operational signals, and dealing with the messy feedback that only arrives once software is actually in use.

Production is where bad assumptions become expensive.

That experience changes how people think. It gives them a healthier respect for reliability. It teaches them that elegance in design is lovely, but usefulness in production is the actual point.

And frankly, it is very hard to build good judgment without spending at least some time there and picking up some scars.

So yes, I think we should still invest in junior engineers

But if AI removes a chunk of the routine work people used to learn from, then organisations will have to design learning more deliberately. They cannot assume it will happen accidentally on the side. The old model relied on juniors doing a lot of the simpler business-as-usual (BAU) work. If that BAU work is now handled differently, then capability-building has to become a more conscious investment.

And that is the bit that may be more difficult to argue in some boardrooms. From a narrow productivity lens, it is tempting to ask: if AI can do the beginner work, why keep hiring beginners? And the answer is that beginner work was never just about productivity. It was part of how we created the skills that we relied on later.

If you optimise that away without replacing its training function, you may get a few very productive years. And then one day you discover you have plenty of AI-generated artefacts, a handful of exhausted seniors, and far too few people with the judgment to run the whole thing.

I do not think that future is inevitable…yet. But I do think it is plausible.