This is a story about a software factory that no one built on purpose. It began as a small team’s attempt to move faster — to automate the repetitive, to delegate the tedious, to let machines handle what machines could handle. That is how most of these stories begin. What follows is an account of one year in the life of that factory and the woman who ran it: what she built, what she lost track of, and what she eventually came to understand about the thing she had made.

—

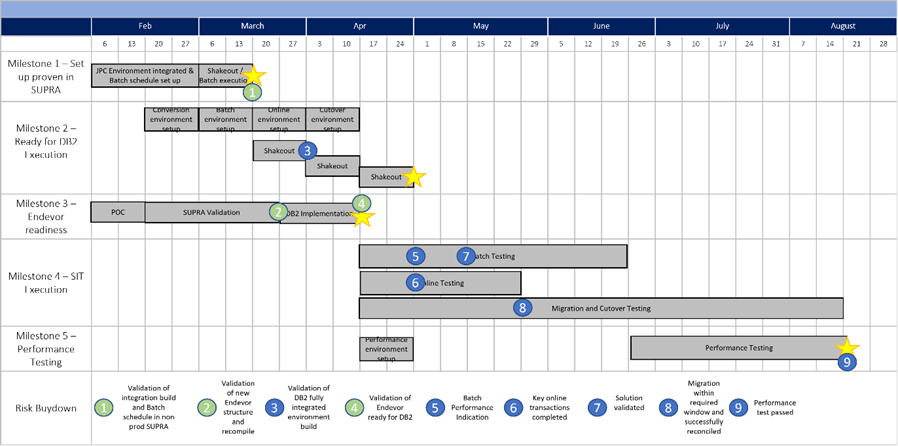

At the start, the entire operating model was captured in four enormous instruction documents and a single configuration file. Elise and her small team wrote the first two in March, when the “factory” was still little more than a prototype: a coding assistant connected to a deployment tool.

The third document was generated in April, mostly through a long back-and-forth with an AI assistant. The fourth she does not remember writing at all. When she checks the change history, the author is not a person’s name, just an arbitrary machine-generated identifier. She had approved the change request while scrolling through her phone.

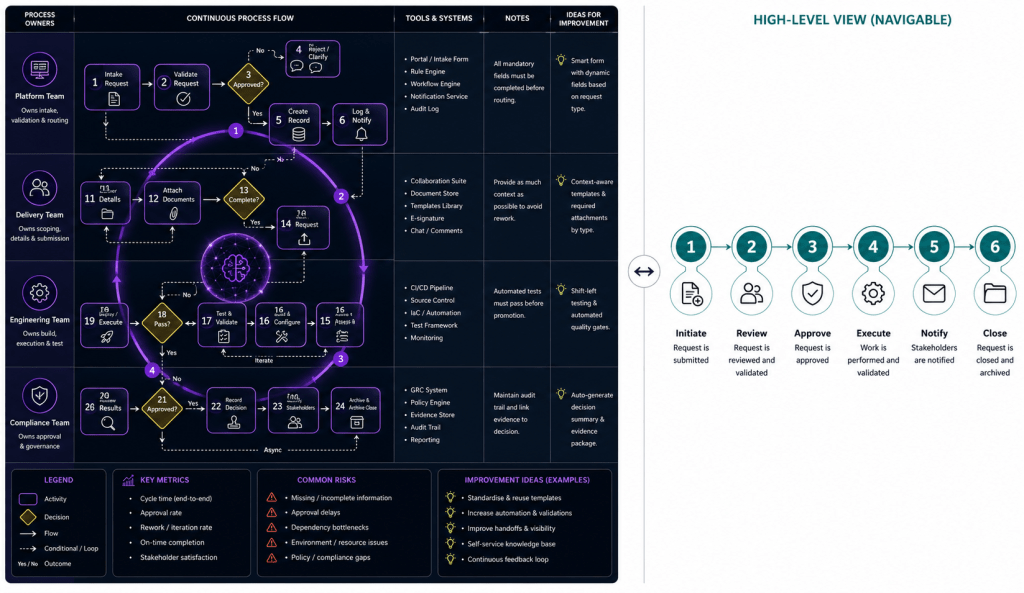

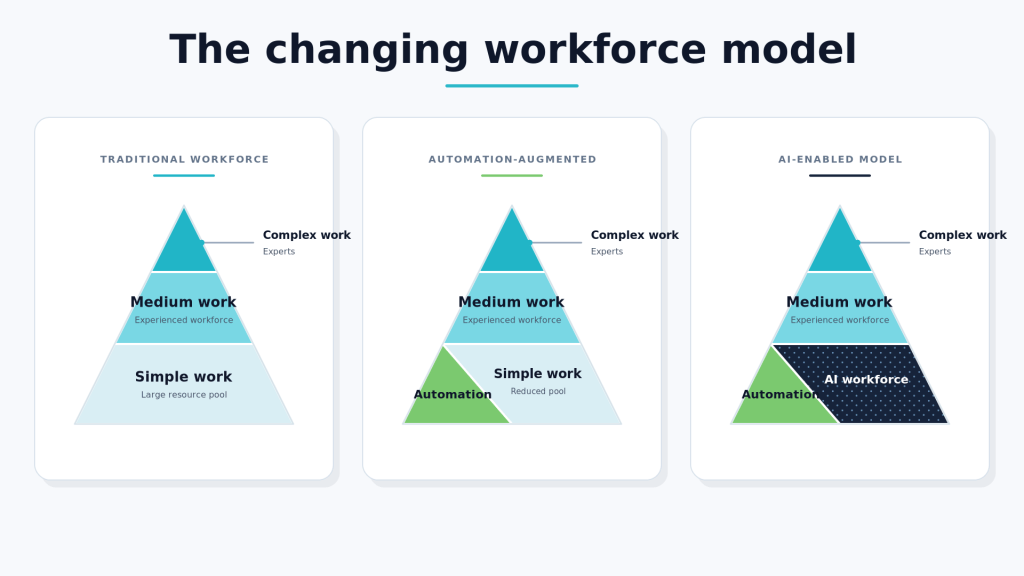

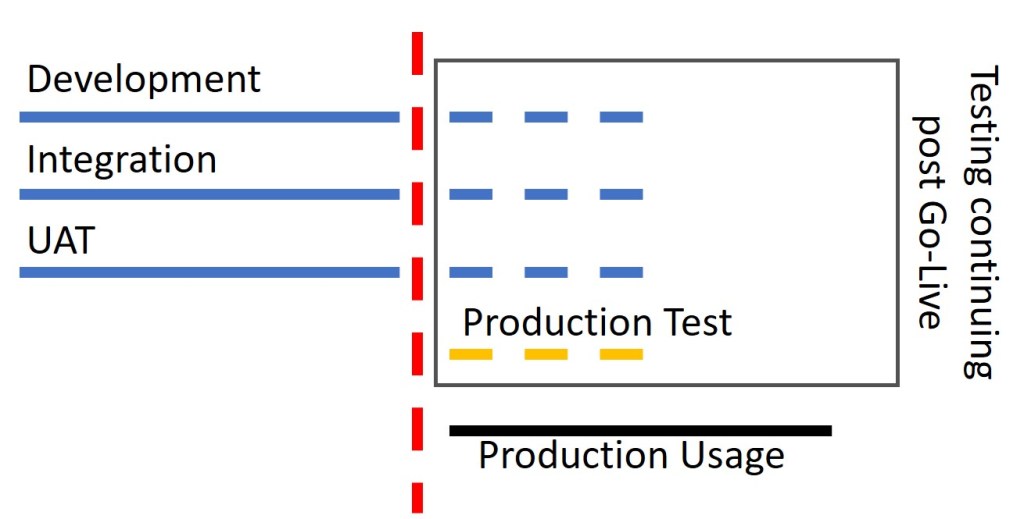

By July, the factory had spread into four layers of AI-driven activity. Each layer had its own automated processes, but they were all coordinated by a set of relatively fixed workflows — almost like a simulation of a software organisation. Everything was controlled through a fifth layer: a simple conversational interface that Elise and her team used to tell the factory what they wanted.

Those high-level instructions were broken down by a coordination layer into smaller tasks, then routed to the right part of the system. Most of the work was done by short-lived AI workers: small autonomous agents spun up for a specific task, placed inside temporary digital workspaces, and shut down once the task was marked complete.

Underneath that workforce was an integration layer. It connected the factory to the surrounding tools: source code repositories, deployment pipelines, monitoring dashboards, testing systems, and external services. These connections were wrapped behind clean internal interfaces, so the upper layers did not need to know which tool or vendor sat underneath.

A handful of specialist AI agents moved along this integration layer, packaging useful patterns into reusable capabilities. If the coordination layer needed one of those capabilities, it could call it on demand.

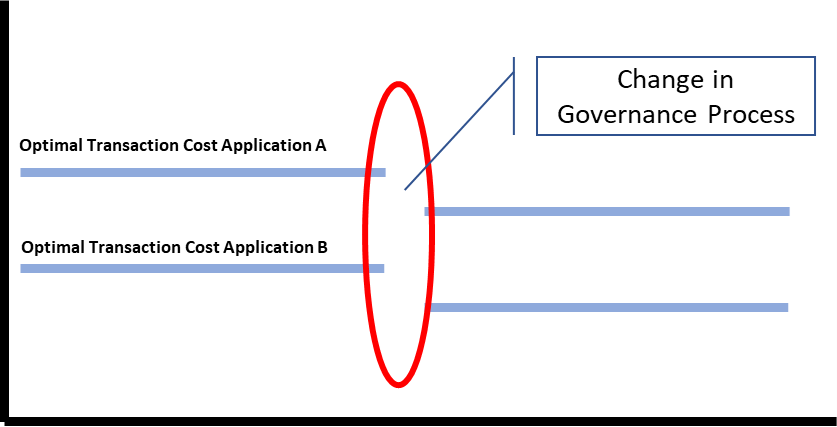

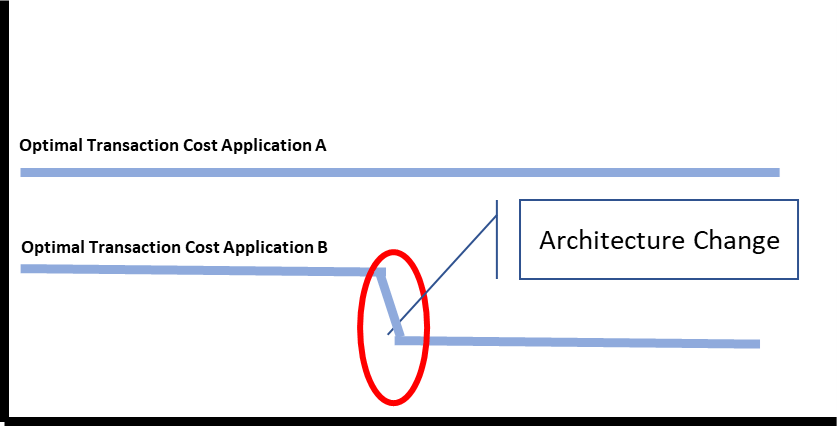

Change requests appeared in the team’s messaging channels. Human engineers reviewed them uneasily, increasingly aware that they no longer fully understood how the application was organised. Eventually, most of the changes were approved and pushed into production.

This phase of the factory was held together by a chaotic event-driven nervous system. A request became a task. A task became a plan. A plan became a set of assignments. Assignments produced completion messages. Completion messages triggered more tasks. Everything generated logs.

At night, Elise would scroll through those logs in the same half-awake way someone might wander through an online encyclopedia: one link leading to another, each entry interesting but only partly meaningful.

Her relationship to the platform had changed completely. The applications and services she supposedly owned had become abstract and strange to her. She no longer understood them as codebases or systems. But she could recognise the behavioural signatures of the management layers above them. She knew when the factory was hesitating. She knew when it was improvising. She knew when it was hiding complexity from her.

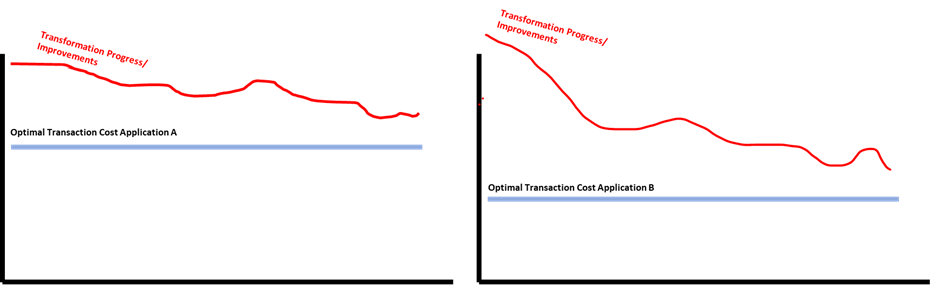

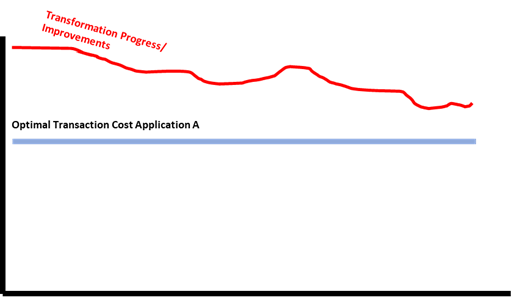

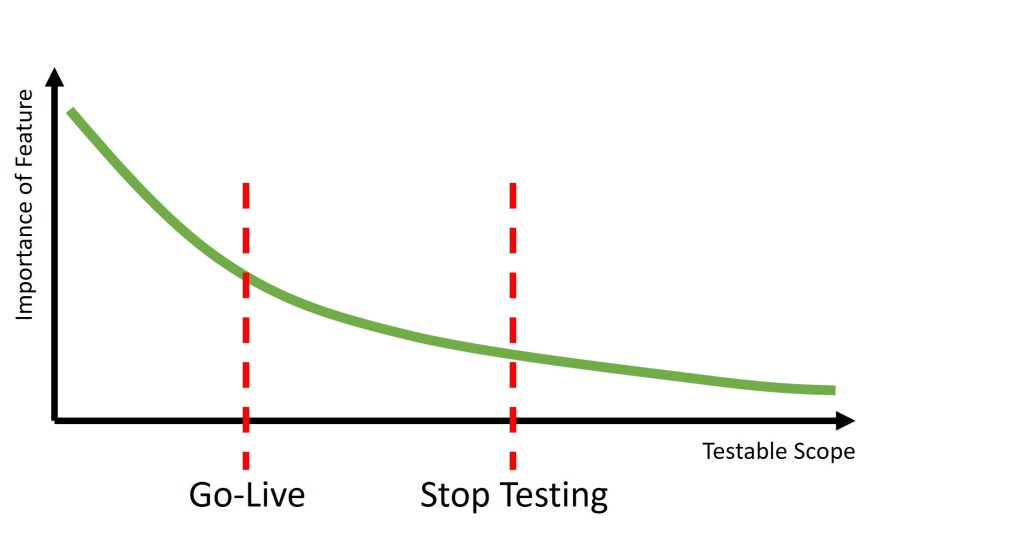

By September, Elise’s team had built an autonomous evaluation service. It did not simply recommend better options; it learned from outcomes. New software was released almost directly into a production environment that mixed simulated users with real customer interactions.

By then, most customer activity was no longer human-to-system. It was machine-to-machine. Customer agents talked to company agents. Buying, testing, requesting, complaining, and renewing all increasingly happened through automated intermediaries.

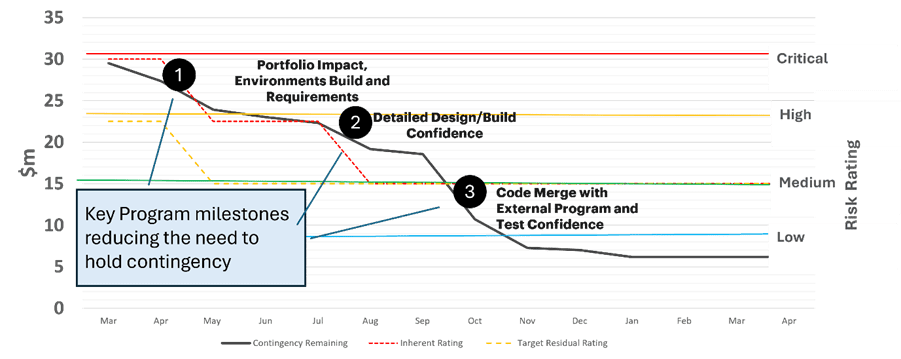

Minor problems were handled automatically. The system would fall back to a safer path, analyse what had happened, write a brief internal postmortem, and feed the lesson straight back into a code or configuration change.

Serious problems were treated differently. If a failure violated the core instructions of the factory, the system tightened the rules, isolated the routines involved, created a separate “satellite factory” to explore a safer approach, and gradually retired the faulty process.

They had long since stopped using a traditional event logging service. The logs were still produced, but nobody really understood them. It took longer to diagnose a problem by reading the history than it did to let the factory’s own immune system respond.

The factory still depended on many third-party tools, but often in ways their creators had probably never intended. The codebase had become like Swiss cheese: full of holes, switches, flags, and conditional paths. Almost every core behaviour, feature, and rule was assembled only at the last possible moment, depending on the situation.

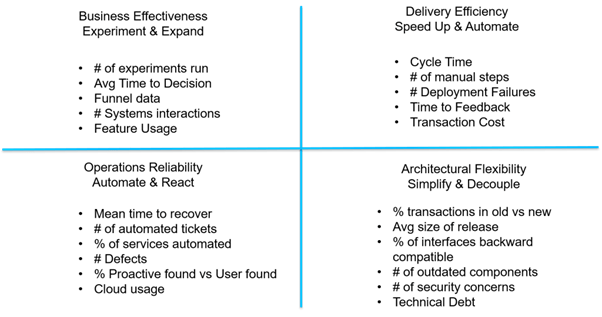

By the end of the year, Elise’s engineering team had almost entirely changed shape. They were no longer mostly programmers. They had become designers, architects, systems thinkers, and behavioural analysts.

The only surface everyone truly worked on was the specification: the set of written instructions that told the factory what mattered, what was allowed, what was forbidden, and what counted as success.

The architects and designers focused on trend forecasting and strategic partnerships. Their ideas could not be added to the specification directly. All write access went through two game theorists and a small group of statisticians, who had become the formal gatekeepers of the factory’s intent. The game theorists shaped the rules. The statisticians built the mechanisms to evaluate whether the factory’s output was actually doing what the rules intended — a distinction that had turned out to matter enormously.

Other specialists were there mostly to observe and document the game theorists. There was also an unspoken understanding that, if the game theorists began to behave strangely, someone in the room was expected to contain them.

Meanwhile, the broader internet was becoming less reliable. Large cloud platforms were going down more often. Security breach notifications had become constant background noise for every user. In response, a new class of security tools had emerged that deliberately added noise, leaks, and confusion as a defensive tactic.

Elise’s team noticed a strange rhythm in the factory’s specification changes. For a week, almost nothing would happen. Then, for twenty-four hours, the specification would light up with revisions.

The rhythm felt tidal, but wrong — as though there were two moons pulling at it.

Most of the changes were not caused by instability inside the factory itself. They seemed to be responses to the outside world: outages, market shifts, new security threats, changes in customer behaviour, or signals from other automated systems.

Around Christmas, the internet effectively died twice. The social disruption was catastrophic.

The business, somehow, continued to do well. In fact, it was growing. But it became harder and harder to explain what the business actually did.

Customers appeared through a machine-to-machine purchasing ledger. When their usage reached meaningful scale, the sales team reported increasingly bizarre conversations with the human representatives on the other side. Those people often struggled to explain what they were buying, or why. They were mostly reading instructions that had been handed to them by their own hidden factories.

Elise could not shake the suspicion that these dark factories were beginning to assemble themselves into something larger.

Not by design.

Not by anyone’s design.

But still, unmistakably, together.

NOTE – This is based on, and heavily influenced by, an article by Marek Poliks!