The Dunning-Kruger effect (a.k.a. Illusory Superiority)

If you have attended any of my conference talks or spoken to me about maturity models, you will have heard me mention Dunning-Kruger. Yet to this day I have not written a blog post about it. It's well overdue, as it is the single best explanation for the behaviours I see in our industry and the source of many of my frustrations. Let's take a good hard look at ourselves in the mirror.

What is the Dunning-Kruger effect?

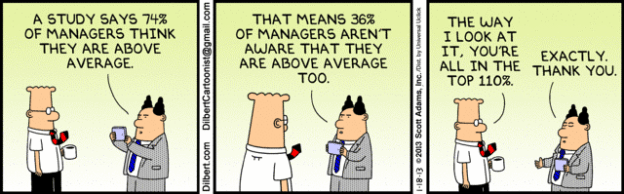

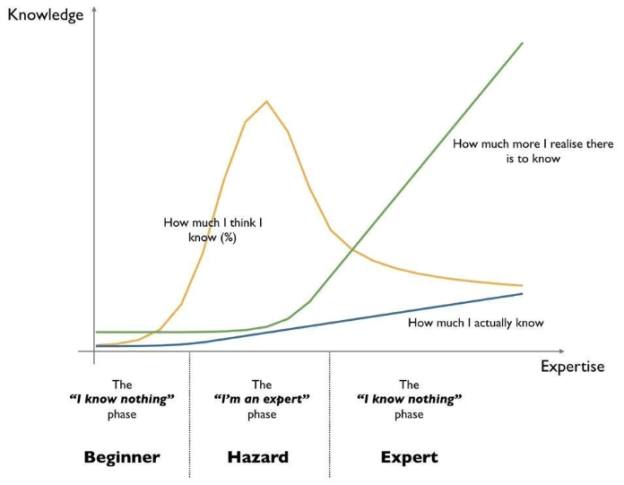

The Dunning-Kruger effect describes how people with limited knowledge or skill in a certain area tend to believe that they are actually quite good at it. Let's start with a few examples:

- 90% of drivers consider themselves to be above average – Daniel Gilbert, Stumbling on Happiness

- 94% of college teachers consider themselves to be above average – Daniel Gilbert, Stumbling on Happiness

- In a survey of faculty at the University of Nebraska, 68% rated themselves in the top 25% for teaching ability. – Wikipedia

- In a similar survey, 87% of MBA students at Stanford University rated their academic performance as above the median. – Wikipedia

- Asked “How do you think people would rate you as a leader?”, 74% of respondents said they were either above average or the best leader their people have ever had. – SmartBrief on Leadership

- Or consider Lake Wobegon, where all the children are above average 😉
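The Stanford figure is especially telling, because by definition at most half of any group can sit strictly above its own median. Here is a minimal Python sketch of that check. The function name `share_above_median` and the randomly generated ratings are hypothetical illustrations, not data from the surveys quoted above.

```python
import random
import statistics


def share_above_median(scores: list[float]) -> float:
    """Return the fraction of scores that lie strictly above the group's median."""
    median = statistics.median(scores)
    # Booleans count as 0/1, so the sum is the number of scores above the median.
    return sum(score > median for score in scores) / len(scores)


# Hypothetical self-ratings for 1,000 people: random numbers, not survey results.
random.seed(42)
ratings = [random.gauss(50, 15) for _ in range(1000)]
print(share_above_median(ratings))
```

However the ratings are distributed, the result can never exceed 0.5, so 87% "above the median" cannot be an accurate self-assessment. Strictly speaking, the bound applies to the median. With a heavily skewed distribution more than half of a group can be above the mean, but never above the median.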

Perhaps it is best explained by the anecdote that inspired Dunning and Kruger's original research:

A man decided to rob a bank and did his research to prepare for the big heist. He entered the bank, robbed it of its money and made his way home, happy that everything had gone so smoothly. When he arrived home, the police were already waiting and arrested him. The man was shocked. In his research he had found out that lemon juice can be used as invisible ink, so he had covered himself in lemon juice, expecting it to make him invisible to people and cameras…

Is Dunning-Kruger really a thing?

You might wonder whether Dunning-Kruger is really a thing and why it matters to you. Let me tell you where I have come across it most often. I do a lot of assessment work to understand where organisations are in their DevOps journey, and one aspect keeps baffling me: Continuous Integration. Here is a fairly common dialogue I have with someone from an organisation's development team; let's call him Adam:

Mirco: Do you practice Continuous Integration?

Adam: (with a little hesitation) Yes we do.

Mirco: (thinking I could stop here and tick the box, but am curious) How do you know that you are doing CI?

Adam: We have a CI server; we are using Jenkins.

Mirco: How often does the CI server build your software?

Adam: We run it once a week, fully automatically.

Mirco: How often are your developers checking in code?

Adam: Multiple times a day.

Mirco: Do your CI server runs cover static code analysis and automated unit tests?

Adam: No.

(…)
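Adam's answers show that having Jenkins does not by itself mean integrating continuously. The follow-up questions can be written down as a simple checklist. This is a minimal Python sketch that assumes a few simplified criteria taken from those questions: builds at least as frequent as check-ins, plus automated unit tests and static analysis in each build. `PipelineSetup` and `ci_gaps` are hypothetical names. The figure of 15 check-ins a week is an assumed stand-in for "multiple times a day".

```python
from dataclasses import dataclass


@dataclass
class PipelineSetup:
    """Hypothetical summary of a team's build setup, gathered in an assessment conversation."""
    has_ci_server: bool
    builds_per_week: int
    commits_per_week: int
    runs_unit_tests: bool
    runs_static_analysis: bool


def ci_gaps(setup: PipelineSetup) -> list[str]:
    """List the answers that contradict a confident 'yes, we do CI'."""
    gaps = []
    if not setup.has_ci_server:
        gaps.append("no CI server")
    # Assumed criterion: integration should happen at least as often as code is checked in.
    if setup.builds_per_week < setup.commits_per_week:
        gaps.append("builds run less often than developers check in")
    if not setup.runs_unit_tests:
        gaps.append("no automated unit tests in the build")
    if not setup.runs_static_analysis:
        gaps.append("no static code analysis in the build")
    return gaps


# Adam's answers: Jenkins, one weekly build, several check-ins a day (assumed 3 a day over 5 days).
adam = PipelineSetup(has_ci_server=True, builds_per_week=1, commits_per_week=15,
                     runs_unit_tests=False, runs_static_analysis=False)
for gap in ci_gaps(adam):
    print(gap)
```

For Adam's answers the checklist reports three gaps, even though the self-assessment was "yes". Comparing build frequency with check-in frequency is a deliberately crude proxy. A real assessment would ask whether every check-in triggers a build.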

I don't believe that people intend to lie in this scenario; I think Dunning-Kruger is working its magic here. I was baffled to see this pattern again and again. Once I learned about Dunning-Kruger, things fell into place.

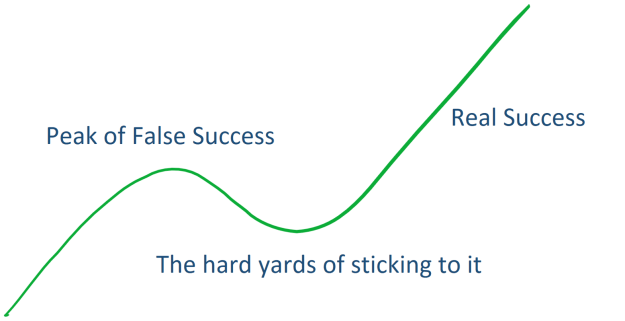

Once you know it, you will see it everywhere in the DevOps and Agile world. People believe they are done when they are only at the beginning. People attend conference talks and, rather than trying to understand how their own situation differs from what is presented, pick up only the things they have in common to validate their self-evaluation.

This also means that self-assessments are potentially dangerous and that maturity models can be misleading. It might also make you question the validity of some of the surveys out there. It might even make you doubt yourself: am I really doing this right, or am I falling into the Dunning-Kruger trap?

I think the most important takeaway is that we need to talk more. We need to understand the context and the situation much better before we do an evaluation. We need to ask others to take an external look at our situation and compare it as objectively as possible. And we need to provide more education for our people as a remedy for Dunning-Kruger. As an alternative to the usual maturity model, I once created a “Civilization”-inspired technology tree for Continuous Delivery with one of my clients; you can read more about this here.

Rather than relying on maturity models to evaluate our teams, perhaps it is better to look at outcomes and to use maturity models as a conversation starter with a coach, who can help us identify which steps to take next. A maturity model is only one of the tools in our toolbag, and we should not put too much emphasis on it.

I will leave you with this final picture that I came across. Unfortunately I don't know its origin (Update: Martin in the comments told me it's from Simon Wardley), but it describes very nicely the danger zone in which many people new to Agile and DevOps find themselves:
