It is hard to move in DevOps circles without hearing the word Microservices again and again. I have sat through a number of conference talks on the topic, and only recently came across a couple that were practical enough for me to take away things that apply to my normal project work. What follows is heavily influenced by the talks at this year’s YOW conference and at the DevOps Enterprise Summit over the last two years.

What are Microservices?

Microservices are the opposite extreme from monolithic applications. So far, so obvious. But what does this mean? Monolithic applications look nice and neat from the outside. They behave very well in architecture diagrams because they are placeholders for “magic happens here”, and some of the complexity is absorbed into that “black box”. I have seen enough Siebel and SAP code to know that this perceived simplicity is just hidden complexity. Microservices make the complexity more visible. As far as catchy quotes go, I like Randy Shoup’s from YOW15: “Microservices are nothing more than SOA done properly.” Within this lies most of the definition of a good Microservice: it is a service (application) that serves one purpose, is self-contained and independent, and has a clearly defined interface and isolated persistence (even to the point of having a database per service).
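To make that definition a bit more tangible, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the `PricingService` name, its two methods and the in-memory SQLite store are hypothetical, and a real service would sit behind a network interface such as HTTP rather than being called in-process. The point is only the shape: one purpose, a small public interface, and a database that nobody else touches.

```python
import sqlite3
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Price:
    sku: str
    cents: int


class PricingService:
    """Hypothetical single-purpose service: it only knows about prices."""

    def __init__(self) -> None:
        # Isolated persistence: this database belongs to this service alone.
        # An in-memory SQLite database stands in for "a database per service".
        self._db = sqlite3.connect(":memory:")
        self._db.execute(
            "CREATE TABLE prices (sku TEXT PRIMARY KEY, cents INTEGER NOT NULL)"
        )

    # The clearly defined interface is just the two public methods below.
    def set_price(self, sku: str, cents: int) -> None:
        if cents < 0:
            raise ValueError("price must not be negative")
        # Insert a new price or overwrite the existing one for this SKU.
        self._db.execute(
            "INSERT OR REPLACE INTO prices (sku, cents) VALUES (?, ?)",
            (sku, cents),
        )
        self._db.commit()

    def get_price(self, sku: str) -> Optional[Price]:
        row = self._db.execute(
            "SELECT cents FROM prices WHERE sku = ?", (sku,)
        ).fetchone()
        return None if row is None else Price(sku, row[0])
```

Other services would only ever call `set_price` and `get_price`. Because they never see the table behind it, the storage can be swapped out later without anyone else noticing.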

An Analogy to help:

“This, milord, is my family’s axe. We have owned it for almost nine hundred years, see. Of course, sometimes it needed a new blade. And sometimes it has required a new handle, new designs on the metalwork, a little refreshing of the ornamentation . . . but is this not the nine hundred-year-old axe of my family? And because it has changed gently over time, it is still a pretty good axe, y’know. Pretty good.”

This is what Microservices do to your architecture…

What are the benefits of Microservices?

Over time everyone in IT has learned that there is no “end-state architecture”. The architecture of your systems always evolves, and as soon as one implementation finishes people are already thinking about the next change. In the past, iterations of the architecture were difficult to achieve because you had to replace large systems. With Microservices you create an architecture ecosystem that allows you to change small components all the time and avoid big-bang migrations. This flexibility means you can evolve your architecture much faster. Additionally, the structure of Microservices gives teams a greater level of control over their service, and this ownership will likely make your teams more productive and responsible while developing your services. The deployment architecture and release mechanism become significantly easier, as you don’t have to worry about dependencies that need to be reflected in the release and deployment of the services. This of course comes with increased complexity in testing, as you have many possible permutations of services and versions to deal with, so automation and intelligent testing strategies are very important.
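A quick back-of-the-envelope calculation shows why the testing side grows so fast. If each service can be live in more than one version, the number of possible version combinations is the product of the per-service version counts. The service names and counts below are made up purely for illustration.

```python
from math import prod


def deployment_combinations(versions_per_service: dict[str, int]) -> int:
    """Number of distinct version combinations that could be live at once."""
    return prod(versions_per_service.values())


# Hypothetical landscape: four services, each with a few live versions.
landscape = {"orders": 3, "pricing": 2, "billing": 2, "search": 4}
print(deployment_combinations(landscape))  # prints 48
```

Four small services already give 48 combinations, which is far more than anyone wants to test by hand. Hence the need for automation and a testing strategy that does not rely on covering every combination end to end.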

When should you use Microservices?

In my view Microservices are relevant in areas that you know your company will invest in over time. Areas where speed to market is especially important are a good starting point, as speed is one of the key benefits of Microservices, whereas dependency-ridden architectures get bogged down. This happens for many reasons, from developers having to learn about all the dependencies of their code to the increasing risk of a component being delayed in the release cycle. Microservices won’t have those issues. Another area to look at is applications that can no longer scale vertically in an economic fashion. Microservices let you scale horizontally, service by service, which opens up more options for finding an economic scaling model. And of course a move towards Microservices requires investment, so go for an area that can afford the investment and where the challenges mentioned above provide the burning platform to start your journey.

What does it take to be successful with Microservices?

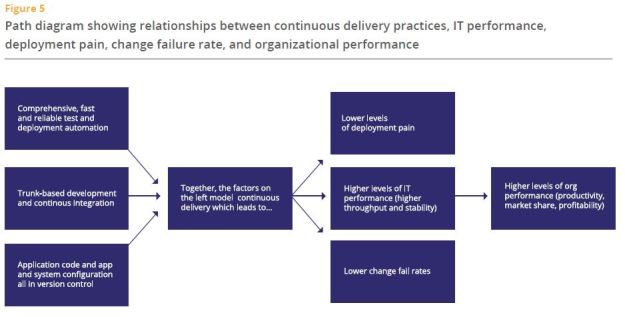

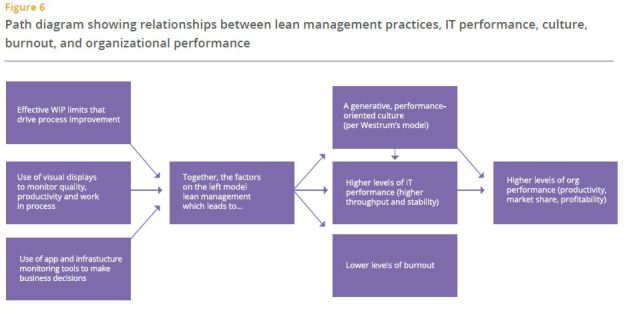

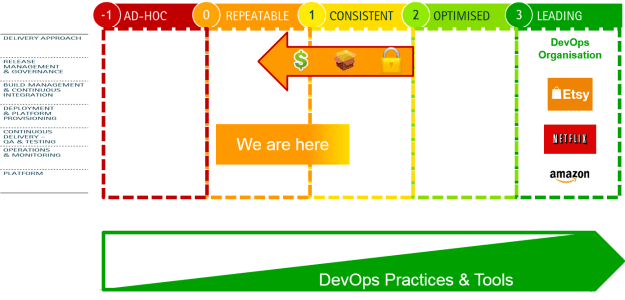

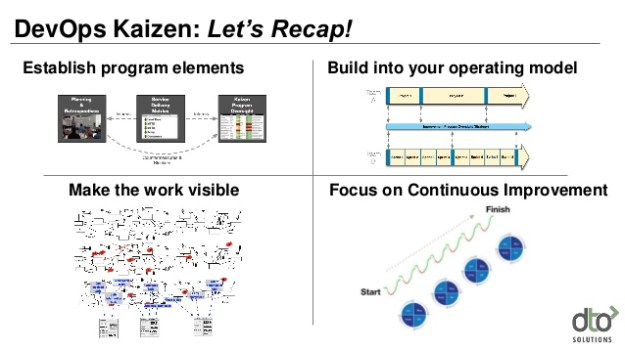

This will not surprise you, but the extra complexity that comes with independently deployable services, which might also exist in production in multiple versions, means you need to really know your stuff. By this I mean you need to be mature in your engineering practices and have a well-oiled deployment pipeline with “Automated Everything” (Continuous Integration, Deployment, Testing). Otherwise the effort and complexity of trying to maintain this manually will quickly outweigh the benefits of Microservices. Conway’s Law says that systems resemble the organisational structure they were built in. To build Microservices we hence need to have mastered the Agile and DevOps principle of cross-functional teams (ideally aligned to your value streams). These teams have full ownership of the Microservices they create (from cradle to grave). This makes sense if the services are small and self-contained, as having multiple teams (DBAs, .NET developers, …) involved would just add overhead to small services. As you can see, my view is that Microservices are the next step of maturity after DevOps and Agile, as they require organisations to have already mastered those (or at least to be close to it).

How can you get started?

If your organisation is ready (maturity in Agile and DevOps is a prerequisite for the adoption of Microservices), go ahead and choose a pilot: create a Microservice that adheres to the definition above and is of real business value (e.g. something that is used a lot, is customer facing and is in a suitable technology stack). Your first pilot is likely not going to be a runaway success, but you will learn from the experience. Microservices will require investment from the organisation, and the initial value might not be clear-cut (as just adding the functionality to the monolith might be cheaper initially), but in the long term the flexibility, speed and resilience of your Microservice architecture will change your IT landscape. Will you end up with a pure Microservice architecture? Most likely not. But your core services might just migrate to an architecture that is built and designed to evolve and hence serves you better in the ever-changing marketplace.

Now, over to you. Let me know what you have learned by using Microservices, and whether you think the above is true or have had different experiences. I am looking forward to hearing what the current state of play is with regard to Microservices.

Last but not least some references to good Microservices talks:

Randy Shoup https://www.youtube.com/watch?v=hAwpVXiLH9M

James Lewis https://www.youtube.com/watch?v=JEtxmsJzrnw

Jez Humble https://www.youtube.com/watch?v=_wnd-eyPoMo